1. Upload the YAML file > 1007 final YAML file

2. Setup > connect to the EC2 instance with MobaXterm

➜ [root@myeks2-bastion-EC2 ~]# export | egrep 'ACCOUNT|AWS_|CLUSTER' | egrep -v 'SECRET|KEY'

declare -x ACCOUNT_ID="039612877023"

declare -x AWS_ACCOUNT_ID="039612877023"

declare -x AWS_DEFAULT_REGION="ap-northeast-2"

declare -x AWS_PAGER=""

declare -x AWS_REGION="ap-northeast-3"

declare -x CLUSTER_NAME="myeks2" ← this name must differ between clusters so that the role names differ as well

➜ echo $CLUSTER_NAME

root-karpenter-demo

➜ export KARPENTER_VERSION=v0.30.0

➜ export CLUSTER_NAME=myeks2

➜ export TEMPOUT=$(mktemp)

➜ export KARPENTER_NAMESPACE=karpenter

➜ echo $KARPENTER_VERSION; echo $CLUSTER_NAME; echo $AWS_DEFAULT_REGION; echo $AWS_ACCOUNT_ID $TEMPOUT
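
Since stale values left over in the shell caused trouble later in these notes, a small check at this point can help. The sketch below is only an illustration: `check_var` is a hypothetical helper, not part of the original setup, and the expected values are the ones used in this walkthrough.

```shell
# Hypothetical helper: compare a variable against the value this walkthrough expects.
check_var() {
  # $1 = variable name, $2 = expected value
  eval "actual=\${$1:-}"
  if [ "$actual" != "$2" ]; then
    printf 'MISMATCH: %s=%s (expected %s)\n' "$1" "$actual" "$2" >&2
    return 1
  fi
  printf 'ok: %s=%s\n' "$1" "$actual"
}

export CLUSTER_NAME=myeks2
export KARPENTER_VERSION=v0.30.0
export KARPENTER_NAMESPACE=karpenter

check_var CLUSTER_NAME myeks2
check_var KARPENTER_VERSION v0.30.0
check_var KARPENTER_NAMESPACE karpenter
```

A mismatch is reported on stderr with a non-zero return code, so the check can gate the following commands with `&&`.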

➜ curl -fsSL https://raw.githubusercontent.com/aws/karpenter/"${KARPENTER_VERSION}"/website/content/en/preview/getting-started/getting-started-with-karpenter/cloudformation.yaml > $TEMPOUT \
  && aws cloudformation deploy \
  --stack-name "Karpenter-${CLUSTER_NAME}" \
  --template-file "${TEMPOUT}" \
  --capabilities CAPABILITY_NAMED_IAM \
  --parameter-overrides "ClusterName=${CLUSTER_NAME}"

✅ If the stack rolls back, edit the resource names in the YAML template and deploy the stack again > append 23 to the names
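
Once the names in the template carry the 23 suffix, every later reference to them has to use the same suffix. A minimal sketch of keeping them in one place, assuming a hypothetical `NAME_SUFFIX` variable (the other variable names here are also made up for illustration):

```shell
# Assumption: NAME_SUFFIX and the *_NAME variables are hypothetical, not part of the original notes.
CLUSTER_NAME=myeks2
AWS_ACCOUNT_ID=039612877023
NAME_SUFFIX=23

NODE_ROLE_NAME="KarpenterNodeRole-${CLUSTER_NAME}${NAME_SUFFIX}"
CONTROLLER_POLICY_NAME="KarpenterControllerPolicy-${CLUSTER_NAME}${NAME_SUFFIX}"
CONTROLLER_ROLE_NAME="${CLUSTER_NAME}${NAME_SUFFIX}-karpenter"

# Print the ARNs that the cluster config and later exports would refer to.
echo "arn:aws:iam::${AWS_ACCOUNT_ID}:role/${NODE_ROLE_NAME}"
echo "arn:aws:iam::${AWS_ACCOUNT_ID}:policy/${CONTROLLER_POLICY_NAME}"
echo "arn:aws:iam::${AWS_ACCOUNT_ID}:role/${CONTROLLER_ROLE_NAME}"
```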

3. Deploy the Karpenter-myeks2 stack > final Karpenter-myeks2 file on the desktop

4. Deploy EKS > enter the command exactly as shown

eksctl create cluster -f - <<EOF
---
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: ${CLUSTER_NAME}
  region: ${AWS_DEFAULT_REGION}
  version: "1.27"
  tags:
    karpenter.sh/discovery: ${CLUSTER_NAME}

iam:
  withOIDC: true
  serviceAccounts:
  - metadata:
      name: karpenter
      namespace: karpenter
    roleName: ${CLUSTER_NAME}23-karpenter
    attachPolicyARNs:
    - arn:aws:iam::${AWS_ACCOUNT_ID}:policy/KarpenterControllerPolicy-${CLUSTER_NAME}23
    roleOnly: true

iamIdentityMappings:
- arn: "arn:aws:iam::${AWS_ACCOUNT_ID}:role/KarpenterNodeRole-${CLUSTER_NAME}23"
  username: system:node:{{EC2PrivateDNSName}}
  groups:
  - system:bootstrappers
  - system:nodes

managedNodeGroups:
- instanceType: m5.large
  amiFamily: AmazonLinux2
  name: ${CLUSTER_NAME}-ng
  desiredCapacity: 2
  minSize: 1
  maxSize: 10
  iam:
    withAddonPolicies:
      externalDNS: true
EOF
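
Because the heredoc is unquoted, the shell expands `${...}` before eksctl ever sees the config. One way to catch a stale variable is to render an excerpt to a file first. This is only a sketch: `PREVIEW` is a hypothetical variable, and the excerpt is deliberately incomplete, so it is for inspection only and not a valid cluster config.

```shell
# Render an excerpt of the config to a temporary file to inspect the expanded values.
CLUSTER_NAME=myeks2
AWS_DEFAULT_REGION=ap-northeast-2
PREVIEW=$(mktemp)   # hypothetical variable name

cat > "$PREVIEW" <<EOF
metadata:
  name: ${CLUSTER_NAME}
  region: ${AWS_DEFAULT_REGION}
iamIdentityMappings:
- username: system:node:{{EC2PrivateDNSName}}
EOF

# {{EC2PrivateDNSName}} is not shell syntax, so it passes through unchanged.
cat "$PREVIEW"
```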

➜ Check the deployed stack
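
The stack can also be checked from the shell instead of the console. The sketch below classifies a CloudFormation status string. `stack_is_ready` is a hypothetical helper, and the `aws` call is left as a comment because it needs credentials and an existing stack.

```shell
# Hypothetical helper: succeed only for statuses that mean the stack is usable.
stack_is_ready() {
  case "$1" in
    CREATE_COMPLETE|UPDATE_COMPLETE) return 0 ;;
    *) return 1 ;;
  esac
}

# With AWS credentials configured, the status could be read like this (not run here):
#   status=$(aws cloudformation describe-stacks --stack-name "Karpenter-${CLUSTER_NAME}" \
#     --query 'Stacks[0].StackStatus' --output text)
status=ROLLBACK_COMPLETE   # example value, as seen when a deploy rolls back

if stack_is_ready "$status"; then
  echo "stack ready: $status"
else
  echo "stack not ready: $status"
fi
```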

➜ go install github.com/awslabs/eks-node-viewer/cmd/eks-node-viewer@v0.5.0

➜ cd ~/go/bin && ./eks-node-viewer

➜ MyDomain=sjh-thrillionx.click

➜ MyDnsHostedZoneId=$(aws route53 list-hosted-zones-by-name --dns-name "${MyDomain}." --query "HostedZones[0].Id" --output text)

✅ echo $MyDomain, $MyDnsHostedZoneId
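
Route 53 returns the hosted zone `Id` with a `/hostedzone/` prefix. If only the bare ID is needed, the prefix can be stripped with parameter expansion. A small sketch, assuming the ID used later as `--txt-owner-id` is this zone's ID (`ZoneIdOnly` is a hypothetical variable name):

```shell
# Example value in the form returned for HostedZones[0].Id (assumed to match this zone).
MyDnsHostedZoneId="/hostedzone/Z03277773PBC0HOIVXLL5"

# Remove the leading "/hostedzone/" if present; a value without the prefix is left unchanged.
ZoneIdOnly="${MyDnsHostedZoneId#/hostedzone/}"
echo "$ZoneIdOnly"
```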

➜ nano externaldns.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: external-dns
  namespace: kube-system
  labels:
    app.kubernetes.io/name: external-dns
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: external-dns
  labels:
    app.kubernetes.io/name: external-dns
rules:
  - apiGroups: [""]
    resources: ["services","endpoints","pods","nodes"]
    verbs: ["get","watch","list"]
  - apiGroups: ["extensions","networking.k8s.io"]
    resources: ["ingresses"]
    verbs: ["get","watch","list"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: external-dns-viewer
  labels:
    app.kubernetes.io/name: external-dns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: external-dns
subjects:
  - kind: ServiceAccount
    name: external-dns
    namespace: kube-system # change to desired namespace: externaldns, kube-addons
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: external-dns
  namespace: kube-system
  labels:
    app.kubernetes.io/name: external-dns
spec:
  strategy:
    type: RollingUpdate
  selector:
    matchLabels:
      app.kubernetes.io/name: external-dns
  template:
    metadata:
      labels:
        app.kubernetes.io/name: external-dns
    spec:
      serviceAccountName: external-dns
      containers:
        - name: external-dns
          image: registry.k8s.io/external-dns/external-dns:v0.13.4
          args:
            - --source=service
            - --source=ingress
            - --domain-filter=thrillionx.click # will make ExternalDNS see only the hosted zones matching provided domain, omit to process all available hosted zones
            - --provider=aws
            - --policy=upsert-only # would prevent ExternalDNS from deleting any records, omit to enable full synchronization
            - --aws-zone-type=public # only look at public hosted zones (valid values are public, private or no value for both)
            - --registry=txt
            - --txt-owner-id=Z03277773PBC0HOIVXLL5
          env:
            - name: AWS_DEFAULT_REGION
              value: ap-northeast-2 # change to region where EKS is installed

✅ MyDomain=$MyDomain MyDnsHostedZoneId=$MyDnsHostedZoneId envsubst < externaldns.yaml | kubectl apply -f -

5. Set up

ops-view

helm repo add geek-cookbook https://geek-cookbook.github.io/charts/

helm repo update

helm install kube-ops-view geek-cookbook/kube-ops-view --version 1.2.2 --set env.TZ="Asia/Seoul" --namespace kube-system

kubectl patch svc -n kube-system kube-ops-view -p '{"spec":{"type":"LoadBalancer"}}'

➜ kubectl get svc -n kube-system

➜ Store the EKS cluster API server endpoint in a variable

✅ export CLUSTER_ENDPOINT=https://76F613261E9BBE3064BB8F8DC934F784.sk1.ap-northeast-3.eks.amazonaws.com

✅ The endpoint address can be found under EKS > cluster > API server endpoint

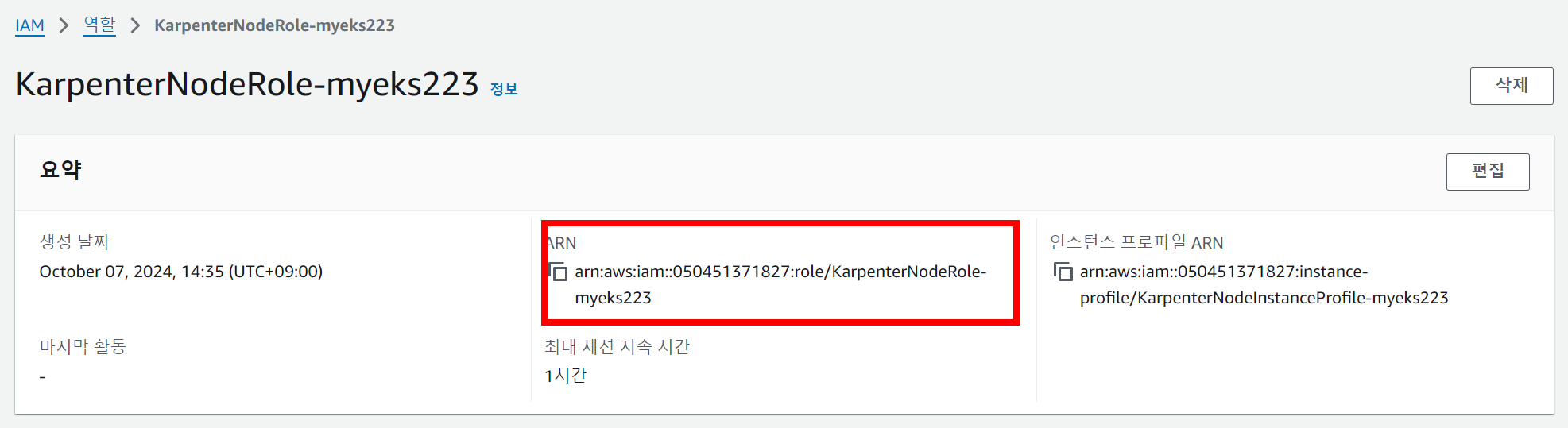

➜ export KARPENTER_IAM_ROLE_ARN=arn:aws:iam::050451371827:role/KarpenterNodeRole-myeks223

✅ The ARN can be found under IAM > Roles > KarpenterNodeRole-myeks223

➜ export KARPENTER_IAM_ROLE_ARN="arn:aws:iam::${AWS_ACCOUNT_ID}:role/${CLUSTER_NAME}23-karpenter"

➜ echo $CLUSTER_ENDPOINT; echo $KARPENTER_IAM_ROLE_ARN
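
The endpoint hostname carries its region, so it can be compared against `AWS_DEFAULT_REGION` before installing anything. A minimal sketch, assuming the hostname always ends in `<region>.eks.amazonaws.com` as in the value above (`host` and `endpoint_region` are hypothetical variable names):

```shell
CLUSTER_ENDPOINT=https://76F613261E9BBE3064BB8F8DC934F784.sk1.ap-northeast-3.eks.amazonaws.com
AWS_DEFAULT_REGION=ap-northeast-2

host="${CLUSTER_ENDPOINT%.eks.amazonaws.com}"   # drop the fixed domain suffix
endpoint_region="${host##*.}"                   # keep the last dot-separated label

echo "endpoint region: $endpoint_region"
if [ "$endpoint_region" != "$AWS_DEFAULT_REGION" ]; then
  echo "warning: endpoint region differs from AWS_DEFAULT_REGION=$AWS_DEFAULT_REGION"
fi

# With credentials configured, the endpoint could also be read from the API (not run here):
#   aws eks describe-cluster --name "${CLUSTER_NAME}" --query "cluster.endpoint" --output text
```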

6. Install Karpenter

aws iam create-service-linked-role --aws-service-name spot.amazonaws.com || true

helm registry logout public.ecr.aws

helm upgrade --install karpenter oci://public.ecr.aws/karpenter/karpenter --version "${KARPENTER_VERSION}" --namespace "${KARPENTER_NAMESPACE}" --create-namespace \
  --set "settings.aws.clusterName=${CLUSTER_NAME}" \
  --set "settings.clusterName=${CLUSTER_NAME}" \
  --set "settings.interruptionQueue=${CLUSTER_NAME}" \
  --set controller.resources.requests.cpu=1 \
  --set controller.resources.requests.memory=1Gi \
  --set controller.resources.limits.cpu=1 \
  --set controller.resources.limits.memory=1Gi \
  --wait

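➜ Troubleshooting

✅ The problem: the variables held different values than expected (left over in the shell), so the release had been installed into kube-system

# Check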

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $CLUSTER_NAME

root-karpenter-demo

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# export CLUSTER_NAME=myeks2

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $CLUSTER_NAME

myeks2

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $KARPENTER_VERSION

1.0.6

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# export KARPENTER_VERSION=v0.30.0

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $KARPENTER_VERSION

v0.30.0

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $KARPENTER_NAMESPACE

kube-system

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# export KARPENTER_NAMESPACE=karpenter

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# echo $KARPENTER_NAMESPACE

karpenter

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get pod -n karpenter

No resources found in karpenter namespace.

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get pod

No resources found in default namespace.

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get ns

NAME STATUS AGE

default Active 58m

kube-node-lease Active 58m

kube-public Active 58m

kube-system Active 58m

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get deploy

No resources found in default namespace.

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get clusterrole karpenter-admin -o yaml

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# helm uninstall karpenter --namespace kube-system

release "karpenter" uninstalled

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl delete clusterrole karpenter-admin

Error from server (NotFound): clusterroles.rbac.authorization.k8s.io "karpenter-admin" not found

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl delete clusterrolebinding karpenter-admin

Error from server (NotFound): clusterrolebindings.rbac.authorization.k8s.io "karpenter-admin" not found

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# helm upgrade --install karpenter oci://public.ecr.aws/karpenter/karpenter --version "${KARPENTER_VERSION}" --namespace "${KARPENTER_NAMESPACE}" --create-namespace \

> --set "settings.aws.clusterName=${CLUSTER_NAME}" \

> --set "settings.clusterName=${CLUSTER_NAME}" \

> --set "settings.interruptionQueue=${CLUSTER_NAME}" \

> --set controller.resources.requests.cpu=1 \

> --set controller.resources.requests.memory=1Gi \

> --set controller.resources.limits.cpu=1 \

> --set controller.resources.limits.memory=1Gi \

> --wait

Release "karpenter" does not exist. Installing it now.

Pulled: public.ecr.aws/karpenter/karpenter:v0.30.0

Digest: sha256:6ee7bdbb99510b9037a648ef6fe405f7683a450d47cfda0ea97e24b1416867f0

NAME: karpenter

LAST DEPLOYED: Mon Oct 7 06:42:31 2024

NAMESPACE: karpenter

STATUS: deployed

REVISION: 1

TEST SUITE: None

(bs-sa-user4@myeks2:N/A) [root@myeks2-bastion-EC2 ~]# kubectl get pod -n karpenter

NAME READY STATUS RESTARTS AGE

karpenter-7bbf548c8c-4kx4b 1/1 Running 0 39s

karpenter-7bbf548c8c-7xpjh 1/1 Running 0 39s
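
To avoid repeating this in a new session, the three values could be kept in a file and sourced before running any command. A sketch under that assumption: `ENV_FILE` is a hypothetical name, and a fixed path would be more practical than `mktemp` in real use.

```shell
# Hypothetical env file holding the values this walkthrough expects.
ENV_FILE=$(mktemp)
cat > "$ENV_FILE" <<'EOF'
export CLUSTER_NAME=myeks2
export KARPENTER_VERSION=v0.30.0
export KARPENTER_NAMESPACE=karpenter
EOF

# Simulate the stale values seen in the session above.
CLUSTER_NAME=root-karpenter-demo
KARPENTER_VERSION=1.0.6
KARPENTER_NAMESPACE=kube-system

# Sourcing the file overwrites the stale values in the current shell.
. "$ENV_FILE"
echo "$CLUSTER_NAME $KARPENTER_VERSION $KARPENTER_NAMESPACE"
```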

cat <<EOF | kubectl apply -f -
apiVersion: karpenter.sh/v1alpha5
kind: Provisioner
metadata:
  name: default
spec:
  requirements:
    - key: karpenter.sh/capacity-type
      operator: In
      values: ["spot"]
  limits:
    resources:
      cpu: 10000
  providerRef:
    name: default
  ttlSecondsAfterEmpty: 30 # delete a node once it has been empty for 30 seconds
---
apiVersion: karpenter.k8s.aws/v1alpha1
kind: AWSNodeTemplate
metadata:
  name: default
spec:
  subnetSelector:
    karpenter.sh/discovery: ${CLUSTER_NAME} # expands to the cluster name
  securityGroupSelector:
    karpenter.sh/discovery: ${CLUSTER_NAME} # expands to the cluster name
EOF

✅ Reference:

https://karpenter.sh/docs/getting-started/getting-started-with-karpenter/

** Cleanup **

eksctl delete cluster --name "${CLUSTER_NAME}" && aws cloudformation delete-stack --stack-name "Karpenter-${CLUSTER_NAME}"

**Reference**

https://techblog.gccompany.co.kr/karpenter-7170ae9fb677
